TL;DR

1. API rate limiting is crucial for protecting your services from abuse, ensuring stable performance, and maintaining fair usage policies.

2. It prevents overload, defends against DoS attacks, helps control costs, and supports API monetization models by enforcing quotas.

3. Key algorithms include Fixed Window, Sliding Window Log, Sliding Window Counter, Token Bucket, and Leaky Bucket, each with distinct pros and cons.

4. DigitalAPI offers robust API management solutions that include advanced rate limiting capabilities, ensuring your APIs are secure, stable, and scalable.


APIs are the lifeblood of modern digital ecosystems, enabling seamless communication between services, applications, and users. However, their very accessibility also presents significant challenges. Uncontrolled access can quickly lead to resource exhaustion, degraded performance, and even malicious attacks like Denial-of-Service (DoS). Imagine your backend infrastructure buckling under an unexpected surge of requests, leaving legitimate users stranded and critical services unresponsive. This is where rate limiting, a core part of API management, steps in as an essential guardian. It is not just a technical safeguard but a strategic necessity for the stability, fairness, and longevity of your API offerings, which makes implementing it well a priority for any API provider.

What is API Rate Limiting?

API rate limiting is a strategy for controlling the number of requests a user or client can make to an API within a defined time window. Its primary goal is to prevent the overuse of resources, either accidental or malicious, ensuring consistent performance and availability for all legitimate users. Think of it as a traffic cop for your digital endpoints, directing and sometimes slowing down the flow to keep the system running smoothly.

This control mechanism sets a cap on how many calls can be made per second, minute, or hour, preventing individual clients from monopolizing server resources. Without effective rate limiting, a single rogue application or a coordinated attack could overwhelm your servers, leading to downtime, poor user experience, and potential data breaches. It's a fundamental component of robust API security and responsible resource management.

Why is API Rate Limiting Essential?

Implementing API rate limiting isn't just a good practice; it's a critical component of a healthy and scalable API ecosystem. Here’s why it's indispensable for modern applications and services:

1. Prevents Abuse and Attacks

- Denial of Service (DoS) Attacks: By limiting the number of requests per client, you can mitigate the impact of DoS and Distributed DoS (DDoS) attacks that aim to overload your servers and make your services unavailable.

- Brute-Force Attacks: Rate limiting makes it harder for attackers to guess API authentication credentials or enumerate resources by restricting the number of attempts they can make in a short period.

- Data Scraping: It prevents bots from rapidly scraping large amounts of data from your APIs, protecting your intellectual property and reducing server load.

2. Ensures Fair Usage and Stability

- Resource Allocation: Guarantees that no single user or application consumes a disproportionate share of server resources, ensuring that your API remains responsive and available for everyone.

- Performance Consistency: By controlling request volume, rate limiting helps maintain predictable API performance, preventing spikes in load that can degrade response times for all users.

- Operational Stability: Protects your backend infrastructure from becoming overwhelmed, leading to fewer crashes, errors, and manual interventions.

3. Manages Costs and Supports Monetization

- Infrastructure Cost Control: Reduces the need to over-provision servers to handle unpredictable traffic spikes, leading to more efficient use of computing resources and lower operational costs.

- Tiered Access: Enables the implementation of tiered API access, where different subscription levels or user types are granted different rate limits. This is fundamental for implementing billing quotas and SLAs, making it a powerful tool for effective API monetization.

Key Concepts and Metrics in Rate Limiting

Before diving into implementation, it's crucial to understand the fundamental concepts and metrics involved in API rate limiting:

- Rate Limit: The maximum number of requests allowed within a specific time window (e.g., 100 requests per minute).

- Time Window: The duration over which the rate limit is applied (e.g., 1 minute, 1 hour, 1 day).

- Burst Limit: Allows for a temporary spike in requests above the steady rate limit. For instance, an API might allow 100 requests/minute but also permit 10 requests within a single second, even if that second's activity would exceed the minute's average.

- Quota: A total number of requests allowed over a longer period, often daily or monthly, separate from the rolling rate limit. For example, 10,000 requests per month, regardless of the minute-by-minute rate.

- Rate Limiting Key: The identifier used to track requests. Common keys include:

  - IP Address: Limits requests from a specific IP address.

  - API Key/Token: Limits requests associated with a specific API key or access token.

  - User ID: Limits requests made by an authenticated user.

  - Client ID: Limits requests from a specific application.

- Rate Limit Headers: HTTP headers returned in responses to inform clients about their current rate limit status. The `X-RateLimit-*` names are a widely used convention rather than a formal standard, while `Retry-After` is a standard HTTP header. Common headers include:

  - `X-RateLimit-Limit`: The maximum number of requests allowed in the current window.

  - `X-RateLimit-Remaining`: The number of requests remaining in the current window.

  - `X-RateLimit-Reset`: The time (usually in UTC epoch seconds) when the current rate limit window resets.

  - `Retry-After`: Sent with a `429 Too Many Requests` response, indicating how long the client should wait before making another request.

Common API Rate Limiting Algorithms/Strategies

Different algorithms offer varying levels of precision, fairness, and resource consumption. Choosing the right one depends on your specific needs and infrastructure.

1. Fixed Window Counter

- How it works: A fixed time window (e.g., 60 seconds) is defined. A counter tracks requests within that window. Once the limit is reached, all subsequent requests until the window resets are denied.

- Pros: Simple to implement and understand. Low resource usage.

- Cons: Can suffer from "bursty" traffic at the edge of the window. For example, if the limit is 100 requests/minute, a client could make 100 requests in the last second of a window and another 100 in the first second of the next, totaling 200 requests in two seconds.
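To make the window-edge problem concrete, here is a minimal in-memory sketch of a fixed window counter. The class name `FixedWindowLimiter`, the `allow` method, and the explicit `now` argument (seconds, passed in so the example is deterministic) are illustrative assumptions, not part of any particular product.

```python
from collections import defaultdict


class FixedWindowLimiter:
    """Allow at most `limit` requests per key in each fixed window."""

    def __init__(self, limit: int, window_seconds: int):
        self.limit = limit
        self.window_seconds = window_seconds
        # (key, window index) -> requests counted in that window.
        # Old windows are never evicted in this sketch; real code should expire them.
        self.counts = defaultdict(int)

    def allow(self, key: str, now: float) -> bool:
        window_index = int(now // self.window_seconds)
        bucket = (key, window_index)
        if self.counts[bucket] >= self.limit:
            return False
        self.counts[bucket] += 1
        return True


limiter = FixedWindowLimiter(limit=100, window_seconds=60)
# 100 requests at the end of one window, 100 more at the start of the next.
late = sum(limiter.allow("client-a", now=59.5) for _ in range(100))
early = sum(limiter.allow("client-a", now=60.5) for _ in range(100))
print(late + early)  # 200 accepted within about one second
```

The counter resets as soon as the window index changes, which is exactly why both batches are accepted.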

2. Sliding Window Log

- How it works: Stores a timestamp for every request made by a client. To check if a request is allowed, it counts all timestamps within the last `N` seconds/minutes (the sliding window). If the count exceeds the limit, the request is denied.

- Pros: Very accurate and fair. Avoids the "bursty" issue of the fixed window.

- Cons: High memory consumption, as it needs to store timestamps for every request. Can be computationally expensive for high-volume APIs.
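A sliding window log can be sketched in a few lines. This version is an assumption-laden illustration: it keeps timestamps in a per-key `deque`, records only accepted requests (some implementations also log rejected ones), and takes `now` as an argument for determinism.

```python
from collections import defaultdict, deque


class SlidingWindowLog:
    """Allow at most `limit` requests per key in any trailing window."""

    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window_seconds = window_seconds
        self.logs = defaultdict(deque)  # key -> timestamps of accepted requests

    def allow(self, key: str, now: float) -> bool:
        log = self.logs[key]
        # Drop timestamps that have fallen out of the trailing window.
        while log and log[0] <= now - self.window_seconds:
            log.popleft()
        if len(log) >= self.limit:
            return False
        log.append(now)
        return True


limiter = SlidingWindowLog(limit=100, window_seconds=60)
late = sum(limiter.allow("client-a", now=59.5) for _ in range(100))
early = sum(limiter.allow("client-a", now=60.5) for _ in range(100))
print(late, early)  # 100 0: the second batch is rejected
```

The memory cost is visible here: one stored timestamp per accepted request, per key.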

3. Sliding Window Counter

- How it works: This is a hybrid approach. It divides the time into fixed windows and keeps a counter for each. When a new request arrives, it calculates an interpolated count for the sliding window by combining the current window's count with a weighted fraction of the previous window's count.

- Pros: Good balance between accuracy and resource usage. Mitigates the burst problem better than Fixed Window, but less memory intensive than Sliding Window Log.

- Cons: More complex to implement than Fixed Window. Less precise than Sliding Window Log.
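The interpolation can be written as `estimated = current + previous * (1 - elapsed_fraction)`, where `elapsed_fraction` is how far the clock is into the current fixed window. The sketch below is one possible in-memory version; the class and attribute names are assumptions, and it presumes `now` never moves backwards.

```python
class SlidingWindowCounter:
    """Approximate a sliding window using two fixed-window counters per key."""

    def __init__(self, limit: int, window_seconds: int):
        self.limit = limit
        self.window_seconds = window_seconds
        self.state = {}  # key -> (window index, current count, previous count)

    def allow(self, key: str, now: float) -> bool:
        index = int(now // self.window_seconds)
        stored_index, current, previous = self.state.get(key, (index, 0, 0))
        if index == stored_index + 1:
            previous, current = current, 0   # rolled into the next window
        elif index > stored_index + 1:
            previous, current = 0, 0         # at least one idle window in between
        elapsed_fraction = (now % self.window_seconds) / self.window_seconds
        # Weight the previous window by the part of it still inside the sliding window.
        estimated = current + previous * (1.0 - elapsed_fraction)
        allowed = estimated < self.limit
        if allowed:
            current += 1
        self.state[key] = (index, current, previous)
        return allowed


limiter = SlidingWindowCounter(limit=100, window_seconds=60)
first = sum(limiter.allow("client-a", now=30.0) for _ in range(120))
# Halfway through the next window, half of the previous count still applies.
second = sum(limiter.allow("client-a", now=90.0) for _ in range(120))
print(first, second)  # 100 50
```

Only three numbers are stored per key, which is the memory saving compared with the log approach; the price is that the estimate assumes requests in the previous window were evenly spread.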

4. Token Bucket

- How it works: A "bucket" with a fixed capacity is filled with "tokens" at a constant rate. Each API request consumes one token. If the bucket is empty, the request is denied. Unused tokens accumulate during quiet periods, up to the bucket's capacity, so a client can briefly exceed the refill rate with a burst no larger than that capacity.

- Pros: Allows for bursts of traffic while still enforcing an average rate. Simple to implement.

- Cons: Can allow a large burst after a period of inactivity, which might still strain resources.
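A token bucket needs only two stored values per client: the token count and the time of the last refill. The following is a minimal single-client sketch; `TokenBucket`, `refill_per_second`, and the explicit `now` argument are names chosen for illustration.

```python
class TokenBucket:
    """Refill tokens at a constant rate; each request spends one token."""

    def __init__(self, capacity: float, refill_per_second: float, now: float = 0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # assumption: the bucket starts full
        self.updated_at = now

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Add the tokens earned since the last call, capped at capacity.
        elapsed = max(0.0, now - self.updated_at)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated_at = max(self.updated_at, now)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


bucket = TokenBucket(capacity=10, refill_per_second=1.0)
burst = sum(bucket.allow(now=0.0) for _ in range(15))
later = sum(bucket.allow(now=3.0) for _ in range(5))
print(burst, later)  # 10 3
```

The first batch shows the burst allowance (the full capacity of 10), and the second shows the average rate taking over: three seconds of refill buys three more requests.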

5. Leaky Bucket

- How it works: Requests are added to a queue (the "bucket") and processed at a constant rate ("leak rate"). If the queue overflows, new requests are denied.

- Pros: Smooths out bursty traffic, processing requests at a consistent rate. Excellent for protecting backend services from sudden spikes.

- Cons: Requests can be delayed if the queue fills up, increasing latency. Denies requests when the bucket is full, even if the average rate over a longer period is acceptable. More suited for API throttling than strict rate limiting.
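The queue-based leaky bucket described above can be sketched with a bounded queue and a drain step that a worker would call periodically. The names `LeakyBucket`, `offer`, and `drain` are assumptions for this example, and requests are plain strings standing in for real work.

```python
from collections import deque


class LeakyBucket:
    """Queue requests up to `capacity`; release them at a constant leak rate."""

    def __init__(self, capacity: int, leak_per_second: float, now: float = 0.0):
        self.capacity = capacity
        self.leak_per_second = leak_per_second
        self.queue = deque()
        self.last_leak = now

    def offer(self, request: str) -> bool:
        """Enqueue a request, or reject it if the bucket is full."""
        if len(self.queue) >= self.capacity:
            return False
        self.queue.append(request)
        return True

    def drain(self, now: float) -> list:
        """Return the requests that are due for processing at time `now`."""
        due = int((now - self.last_leak) * self.leak_per_second)
        if due <= 0:
            return []
        self.last_leak += due / self.leak_per_second
        return [self.queue.popleft() for _ in range(min(due, len(self.queue)))]


bucket = LeakyBucket(capacity=5, leak_per_second=1.0)
accepted = sum(bucket.offer(f"req-{i}") for i in range(8))
processed = bucket.drain(now=2.0)
print(accepted, processed)  # 5 ['req-0', 'req-1']
```

However large the incoming spike, the backend only ever sees one request per second in this configuration, at the cost of queued requests waiting their turn.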

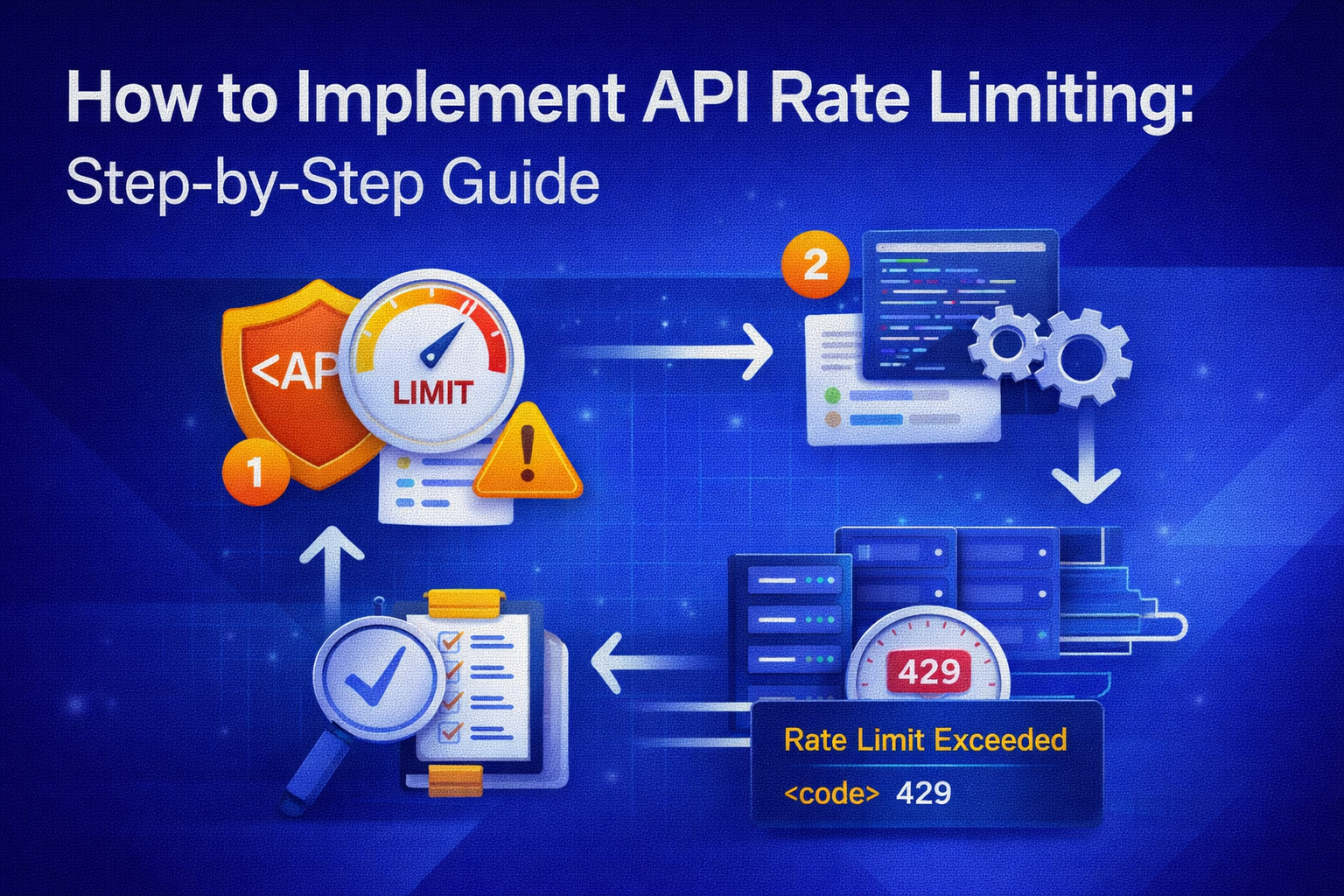

Step-by-Step Guide to Implement API Rate Limiting

Implementing effective API rate limiting requires careful planning and execution. Follow these steps for a robust setup:

Step 1: Define Your Goals and Policies

Before writing any code, clearly articulate why you need rate limiting and what you want to achieve. This includes defining specific API management policies.

- Identify Critical Resources: Which APIs or endpoints are most susceptible to abuse or resource exhaustion? (e.g., authentication endpoints, data-intensive queries).

- Determine Limits: What are reasonable request limits for different types of users (anonymous, free tier, premium, internal)? Consider requests per second, minute, hour, and possibly daily/monthly quotas.

- Business Impact: How do these limits align with your business model, API monetization strategies, and user experience goals?

- Error Handling: What should happen when a limit is exceeded? (e.g., return a 429 status code with a `Retry-After` header).
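It can help to write the outcome of this step down as data before choosing any tooling. The sketch below is purely illustrative: the tier names mirror the ones mentioned above, but every number, the `/auth/login` path, and the `policy_for` helper are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RateLimitPolicy:
    requests_per_minute: int
    burst: int
    monthly_quota: Optional[int]  # None means no monthly quota


# Hypothetical limits per user type; tune these to your own traffic.
TIER_POLICIES = {
    "anonymous": RateLimitPolicy(requests_per_minute=20, burst=5, monthly_quota=1_000),
    "free": RateLimitPolicy(requests_per_minute=60, burst=10, monthly_quota=10_000),
    "premium": RateLimitPolicy(requests_per_minute=600, burst=100, monthly_quota=1_000_000),
    "internal": RateLimitPolicy(requests_per_minute=6_000, burst=1_000, monthly_quota=None),
}

# Stricter limits for endpoints that are prone to abuse, regardless of tier.
ENDPOINT_OVERRIDES = {
    "/auth/login": RateLimitPolicy(requests_per_minute=5, burst=2, monthly_quota=None),
}


def policy_for(tier: str, path: str) -> RateLimitPolicy:
    """Pick the endpoint override if one exists, else the tier policy."""
    if path in ENDPOINT_OVERRIDES:
        return ENDPOINT_OVERRIDES[path]
    return TIER_POLICIES.get(tier, TIER_POLICIES["anonymous"])


print(policy_for("premium", "/orders").requests_per_minute)      # 600
print(policy_for("premium", "/auth/login").requests_per_minute)  # 5
```

Keeping policies in one table like this makes it easier to review them against business goals and to publish them later in documentation.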

Step 2: Choose an Algorithm

Based on your goals and resource constraints, select the most suitable rate limiting algorithm from the ones discussed above.

- Simplicity and Low Overhead: Fixed Window Counter.

- Fairness and Burst Resistance (at a cost): Sliding Window Log or Sliding Window Counter.

- Burst Tolerance with Average Rate Enforcement: Token Bucket.

- Traffic Smoothing: Leaky Bucket (more for throttling).

Step 3: Identify Rate Limiting Keys

How will you uniquely identify a client to apply the rate limit?

- API Key/Token: The most common method for authenticated access. This requires robust API key management.

- IP Address: Suitable for anonymous users but can be problematic behind NATs or proxies, where many users share an IP.

- User ID: For authenticated users, this ensures limits are applied per user regardless of their changing IP or multiple devices.

- Client ID: For applications making requests on behalf of users.
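One way to combine these identifiers is to prefer the most specific one available and fall back to the IP address for anonymous traffic. The precedence order, the key prefixes, and the choice to hash the API key (so the raw secret is not stored alongside counters) are all assumptions of this sketch.

```python
import hashlib
from typing import Optional


def rate_limit_key(
    ip_address: str,
    api_key: Optional[str] = None,
    user_id: Optional[str] = None,
    client_id: Optional[str] = None,
) -> str:
    """Build a tracking key from the most specific identifier available."""
    if user_id:
        return f"user:{user_id}"
    if api_key:
        # Store a short digest rather than the raw key.
        digest = hashlib.sha256(api_key.encode("utf-8")).hexdigest()[:16]
        return f"key:{digest}"
    if client_id:
        return f"client:{client_id}"
    return f"ip:{ip_address}"


print(rate_limit_key("203.0.113.7"))                # ip:203.0.113.7
print(rate_limit_key("203.0.113.7", user_id="42"))  # user:42
```

Prefixing each key with its type keeps an IP address and a user ID that happen to share a value from colliding in the same counter.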

Step 4: Select Your Implementation Point

Where in your architecture will you enforce rate limits?

- API Gateway: This is often the preferred method. An API Gateway (like AWS API Gateway, Kong, Apigee, or Gravitee) acts as a single entry point for all API requests. It can enforce rate limits before requests even reach your backend services, providing centralized control and reducing load on your application servers. Many API gateways offer built-in rate limiting features.

- Application Layer: Implementing rate limiting directly within your application code. This offers fine-grained control for specific endpoints or business logic but increases complexity and distributes the logic across your services. It might be suitable for microservices that don't sit behind a common gateway or for very specific, intricate rate limiting rules.

- Load Balancer/Reverse Proxy: Some load balancers (e.g., NGINX) offer basic rate limiting capabilities, primarily based on IP addresses, suitable for initial defense.

Step 5: Implement the Logic

This is where you translate your chosen algorithm and policies into code or configuration.

- Using an API Gateway: Configure rate limiting policies directly within your gateway's management interface. You'll specify the limit, time window, and the key (e.g., API key, IP address) to track. The gateway handles the counting and enforcement.

- In Application Code: You'll need to store the request count and timestamps for each key (e.g., in a Redis cache). For each incoming request:

  1. Retrieve the current count/timestamps for the client's key.

  2. Apply the logic of your chosen algorithm to determine if the request is allowed.

  3. If allowed, increment the count/add timestamp and proceed with the request.

  4. If denied, return an appropriate error response.
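The per-request steps for application code can be sketched as follows. This is a simplified illustration using a fixed window and a plain `dict` as the store; in a multi-instance deployment the dict would be replaced by a shared store such as Redis, and the read-then-write would need to be atomic. `LIMIT`, `WINDOW_SECONDS`, `is_allowed`, and `handle_request` are hypothetical names.

```python
from typing import Callable, Dict, Tuple

LIMIT = 3            # hypothetical: 3 requests...
WINDOW_SECONDS = 60  # ...per 60-second fixed window


def is_allowed(store: Dict[str, int], key: str, now: float) -> bool:
    counter_key = f"{key}:{int(now // WINDOW_SECONDS)}"
    count = store.get(counter_key, 0)  # retrieve the current count for the key
    if count >= LIMIT:                 # apply the chosen algorithm
        return False
    store[counter_key] = count + 1     # allowed: increment the count
    return True


def handle_request(
    store: Dict[str, int], key: str, now: float, handler: Callable[[], str]
) -> Tuple[int, str]:
    if not is_allowed(store, key, now):
        return 429, "Rate limit exceeded"  # denied: return an error response
    return 200, handler()                  # allowed: proceed with the request


store: Dict[str, int] = {}
statuses = [handle_request(store, "key:abc", 10.0, lambda: "ok")[0] for _ in range(4)]
print(statuses)  # [200, 200, 200, 429]
```

Separating `is_allowed` from `handle_request` keeps the algorithm swappable: any of the strategies discussed earlier could sit behind the same boolean check.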

Step 6: Handle Exceeded Limits Gracefully

When a client exceeds their rate limit, your API should respond clearly and informatively.

- HTTP 429 Too Many Requests: This is the standard HTTP status code for rate limit violations.

- Rate Limit Headers: Include `X-RateLimit-Limit`, `X-RateLimit-Remaining`, and `X-RateLimit-Reset` in the response to help clients understand their status.

- Retry-After Header: Crucially, include a `Retry-After` header telling the client exactly how many seconds they should wait before retrying. This prevents clients from continuously hitting your API when blocked, reducing unnecessary load. Provide clear instructions for clients on how to handle the "Rate Limit Exceeded" response.

- Clear Error Message: A simple, descriptive message in the response body explaining the error.
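As a framework-neutral illustration, the response for an exceeded limit might be assembled like this. The function names, the JSON body shape, and the `rate_limit_exceeded` error code are assumptions; only the status code and header names come from the points above.

```python
import json
import math
from typing import Dict, Tuple


def rate_limit_headers(limit: int, remaining: int, reset_epoch: float, now: float) -> Dict[str, str]:
    """Build the informational headers; add Retry-After once the limit is used up."""
    headers = {
        "X-RateLimit-Limit": str(limit),
        "X-RateLimit-Remaining": str(max(0, remaining)),
        "X-RateLimit-Reset": str(int(reset_epoch)),
    }
    if remaining <= 0:
        # Whole seconds until the window resets, rounded up, never below 1.
        headers["Retry-After"] = str(max(1, math.ceil(reset_epoch - now)))
    return headers


def too_many_requests(limit: int, reset_epoch: float, now: float) -> Tuple[int, Dict[str, str], str]:
    headers = rate_limit_headers(limit, 0, reset_epoch, now)
    headers["Content-Type"] = "application/json"
    body = json.dumps({
        "error": "rate_limit_exceeded",
        "message": f"Limit of {limit} requests reached. Retry in {headers['Retry-After']} seconds.",
    })
    return 429, headers, body


status, headers, body = too_many_requests(limit=100, reset_epoch=1_700_000_030, now=1_700_000_000.5)
print(status, headers["Retry-After"])  # 429 30
```

Sending the same informational headers on successful responses as well lets well-behaved clients slow down before they ever receive a 429.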

Step 7: Monitor and Adjust

Rate limiting is not a "set it and forget it" task. Continuous, comprehensive API monitoring is essential.

- Track Violations: Monitor how often rate limits are being hit. High numbers might indicate misconfigured limits, legitimate high demand, or potential attacks.

- API Performance: Observe if rate limiting is impacting legitimate user experience (e.g., too restrictive) or if performance issues still occur despite limits (e.g., limits are too high).

- Logs and Metrics: Integrate rate limiting metrics into your logging and observability platforms. This provides valuable insights into API usage patterns and helps identify anomalies.

- Iterative Refinement: Be prepared to adjust your rate limits based on usage patterns, feedback, and performance data.

Step 8: Communicate Policies Clearly

Transparency is key for developers using your APIs.

- Documentation: Publish your rate limiting policies in your API developer portal. Clearly state the limits, what triggers a 429 response, and how clients should handle it.

- Examples: Provide code examples for how clients should implement exponential backoff and retry logic when encountering rate limit errors.
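A client-side example for your documentation could look like the sketch below. It honors a numeric `Retry-After` value when present and otherwise falls back to exponential backoff with random jitter. The `send` callable (returning a status, a headers dict, and a body) is a hypothetical stand-in for whatever HTTP client your users have, and the default delays are arbitrary.

```python
import random
import time
from typing import Callable, Dict, Tuple

Response = Tuple[int, Dict[str, str], str]


def call_with_backoff(
    send: Callable[[], Response],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Retry on 429, waiting as instructed or backing off exponentially."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        status, headers, body = send()
        if status != 429 or attempt == max_attempts:
            return status, body
        retry_after = headers.get("Retry-After", "")
        if retry_after.isdigit():
            delay = float(retry_after)  # the server told us how long to wait
        else:
            # Exponential backoff with full jitter: 0.5s, 1s, 2s, ... capped.
            delay = random.uniform(0, min(max_delay, base_delay * 2 ** (attempt - 1)))
        sleep(delay)
    raise AssertionError("unreachable")


# Demo with a fake transport: two 429 responses, then success. No real sleeping.
fake = iter([(429, {"Retry-After": "2"}, ""), (429, {}, ""), (200, {}, "ok")])
waits = []
print(call_with_backoff(lambda: next(fake), sleep=waits.append))  # (200, 'ok')
```

The jitter matters when many clients are throttled at once: without it, they would all retry at the same instant and recreate the spike.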

Tools and Platforms for API Rate Limiting

While you can implement rate limiting from scratch, leveraging existing tools and platforms is often more efficient and reliable:

- API Gateways: As mentioned, most leading API gateways (e.g., AWS API Gateway, Azure API Management, Google Apigee, Kong Gateway, Gravitee API Management) offer powerful, configurable rate limiting as a built-in feature. They are ideal for centralized enforcement.

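- Cloud Load Balancers: Services like AWS Application Load Balancer (ALB) or Google Cloud Load Balancing can provide basic, often IP-based rate limiting, typically through an attached web application firewall (for example, AWS WAF rate-based rules or Google Cloud Armor) rather than the load balancer itself.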

- Redis: A popular choice for storing rate limit counters and timestamps in application-level implementations due to its speed and in-memory data structures.

- NGINX: Can be configured as a reverse proxy with sophisticated rate limiting rules.

- Specialized Libraries/Middleware: Many programming languages have libraries or middleware (e.g., `express-rate-limit` for Node.js, `Flask-Limiter` for Python) that simplify application-level rate limiting.

Best Practices for API Rate Limiting

- Start with Reasonable Defaults: Don't make limits too restrictive initially, as it can hinder adoption. Start with generous limits and tighten them as you gather data.

- Granular Control: Apply different limits based on endpoint, HTTP method, user type, or subscription tier.

- Consider Bursts: Allow for some burst capacity to accommodate legitimate spikes in traffic without immediately denying requests.

- Client-Side Backoff: Encourage (and possibly enforce) client applications to implement exponential backoff and retry strategies when encountering 429 responses.

- Clear Error Messages: Always provide descriptive error messages and include rate limit headers.

- Distributed Systems: In a microservices architecture, ensure rate limiting logic is consistent across services or centralized at an API Gateway to prevent inconsistent enforcement.

- Edge Protection: Implement rate limiting as close to the edge of your network as possible (e.g., at the API Gateway) to shed bad traffic before it hits your core infrastructure.

- Monitor and Alert: Continuously monitor rate limit breaches and system performance. Set up alerts for sustained violations or unexpected drops in API usage.

Challenges and Considerations

Even with a clear guide, implementing API rate limiting can present challenges:

- Identifying Unique Clients: Proxies, NATs, and VPNs can make identifying individual clients by IP address difficult. Combining IP with API keys or user tokens offers better accuracy.

- Distributed Environment: In a highly distributed microservices setup, maintaining a consistent, synchronized rate limit counter across multiple instances can be complex. Centralized solutions like Redis or an API Gateway are crucial here.

- Fairness vs. Performance: Striking the right balance between fair usage for all clients and not overly burdening the system with complex rate limiting calculations.

- False Positives: Overly aggressive limits can block legitimate users, leading to a poor user experience.

- Evolving Usage Patterns: API usage can change rapidly. What's a good limit today might be too restrictive or too lenient tomorrow, necessitating continuous monitoring and adjustment.

Conclusion

Implementing API rate limiting is a non-negotiable aspect of modern API management. It's a powerful tool for safeguarding your infrastructure, ensuring fair resource allocation, and maintaining a high quality of service for your users. By understanding the core concepts, choosing the right algorithms, and following a step-by-step implementation plan, you can effectively protect your APIs from abuse, control costs, and even create differentiated service tiers. Remember, rate limiting is an ongoing process—one that requires continuous monitoring, clear communication, and iterative refinement to adapt to changing usage patterns and security threats. With a thoughtful approach, you can build a resilient API ecosystem that serves both your business and your developer community effectively.

FAQs

1. What is API rate limiting?

API rate limiting is a control mechanism that restricts the number of requests a user or client can make to an API within a specific time frame. Its main purpose is to prevent resource overuse, protect against malicious attacks like DoS, ensure fair access for all users, and maintain the stability and performance of the API service.

2. Why is API rate limiting important?

API rate limiting is crucial for several reasons: it defends against DoS and brute-force attacks, ensures fair access to resources for all legitimate users, prevents server overload, helps manage infrastructure costs, and enables API monetization strategies by enforcing usage quotas for different service tiers. Without it, APIs are vulnerable to abuse and performance degradation.

3. What are the common algorithms for API rate limiting?

Common algorithms include: Fixed Window Counter (simple, but susceptible to burst issues at window edges), Sliding Window Log (highly accurate but resource-intensive), Sliding Window Counter (a good compromise between accuracy and efficiency), Token Bucket (allows for bursts while maintaining an average rate), and Leaky Bucket (smooths out traffic, more for throttling). The best choice depends on specific requirements for fairness, burst tolerance, and resource usage.

4. Where should API rate limiting be implemented?

The most common and recommended place to implement API rate limiting is at the API Gateway. This provides centralized control, offloads the task from backend services, and allows for enforcement before requests consume significant application resources. It can also be implemented at the application layer for fine-grained, business-logic-driven limits, or at a load balancer/reverse proxy for basic, network-level protection.

5. How do I handle "Rate Limit Exceeded" responses?

When a client exceeds their rate limit, your API should respond with an HTTP `429 Too Many Requests` status code. The response should include `X-RateLimit-Limit`, `X-RateLimit-Remaining`, and `X-RateLimit-Reset` headers to inform the client of their status. Most importantly, a `Retry-After` header should be provided, instructing the client how many seconds to wait before attempting another request. Clear documentation on handling these responses, including implementing exponential backoff, is also essential.
